Reflections on GenAI, operationalizing AI, and the role of education from a week in Europe

I spent the last week at World Summit AI in Amsterdam, speaking to and spending time with a few thousand startups, SMBs, and enterprises, and having in-depth technical conversations with leaders of some of the biggest enterprises in London and Madrid that are leaning into AI. I have also been taking some time to reflect, read, and think deeply by the Thames in Richmond.

Here are 5 key takeaways:

1. GenAI conversations are maturing, shifting from early experimentation to what it takes to move GenAI apps into production and maintain them there.

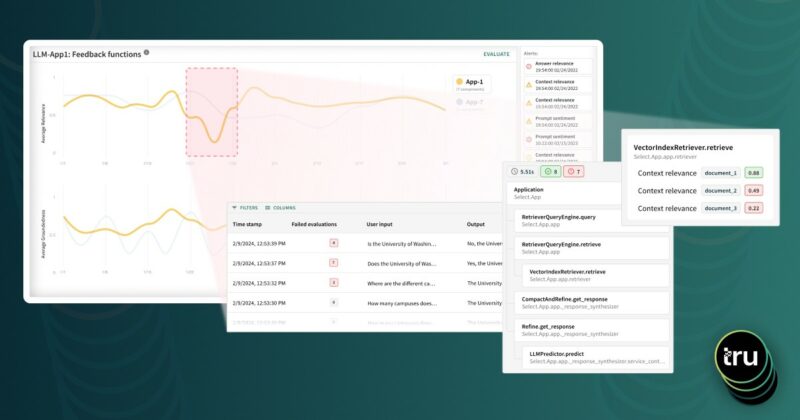

In this context, teams are carefully thinking through the right tech stack for their purposes: Which LLMs should we use? Which vector databases? Which kinds of advanced RAG and agents make sense for our purpose? What tooling should we set up as we make the journey from experimenting in development to running in production? How should we evaluate, debug, and monitor our GenAI apps across their lifecycle? What does an MLOps stack look like?

2. Teams are thinking carefully about the business value from GenAI apps in production.

User experience and cost are key considerations that repeatedly came up in that context.

3. Trustworthiness is back in the spotlight.

Are GenAI apps for question-answering, summarization, agents, and more producing reliable answers? How do we guard against hallucinations? How do we ensure the appropriateness of responses, taking into account considerations of safety, toxicity, fairness, and more? This is being driven primarily by business considerations, although regulatory developments, especially the EU AI Act, are another driver. This area has seen significant research progress and transitions from research to practical tooling.

4. Operationalizing and industrializing the adoption of AI models with end-to-end AI tech stacks continues unabated.

This includes the previous generation of AI models (XGBoost, BERT and more) in addition to the latest GenAI models and apps. Scalability, ease of integration, and functional capabilities for training, inference, and observability are key in this context.

5. AI education and training are important areas of focus.

The rise of GenAI has led to significant changes in the pipelines for building, deploying, and observing models and apps, thus creating a strong need for education and training.

It’s an exciting time for our field: we are seeing significant research advancements and rapid transitions from research to open source and scalable products, coupled with open education initiatives that are reaching millions worldwide and creating a community that can bring this technology to have a positive impact on the world. For me, it’s exciting to be part of all these facets of the GenAI movement via TruEra and various education initiatives at Stanford University, DeepLearning.AI, and TruEra’s AI Quality Workshop course on Udemy.