Learnings from MLConf SF 2022

A core theme that emerged at MLConf 2022 San Francisco was that ML systems are complex, and it takes many people to build a single ML model. Successfully deploying a model requires multiple actors across domains, and it can be difficult for these personas to collaborate without common platforms or tools.

Many stakeholders and contributors impact a model across its lifecycle

To illustrate this, let’s walk through an example. Suppose a retail company has an ML model in production that recommends clothing to users, and a data scientist wants to update the architecture to use a neural network. To do this, the challenger model needs to be tested against the model currently in production, ideally on real-world data. First, a product manager might sit down with a business team to understand the key objectives and metrics used to evaluate the model. The data scientist will then need help from a data engineer, who owns the production data infrastructure, to pull production data into a format that is easier to experiment with, either locally or in dedicated ML experimentation infrastructure maintained by an ML engineer. Another ML engineer might work with the data scientist to understand how to host and deploy the new model, and an entirely separate MLOps engineer is responsible for monitoring the new and old models over time.

In the last few sentences, you were introduced to a handful of personas involved in a machine learning model’s lifecycle. Dr. Ali Arsanjani spoke to exactly this during his talk: as a director at Google Cloud, he has to interact with various roles in the process of model building, including PMs, data analysts, data engineers, data scientists, ML developers, and ML engineers.

Different personas have different perspectives – communication is key

Yet despite these personas working on the same product – creation and deployment of this model – they have fundamentally different goals. A data scientist might be focused on optimizing an accuracy metric on the historical data that they are provided, whereas a product manager is more interested in how the model translates into better KPIs while still meeting regulatory requirements. This was a point underscored by Armand Ruiz from IBM Data Science. He noted that IBM’s own customers struggle with communication between these personas; as one client put it, “Data science teams do not want to understand regulations. They want to use technology which can translate regulatory requirements into something [they] understand.”

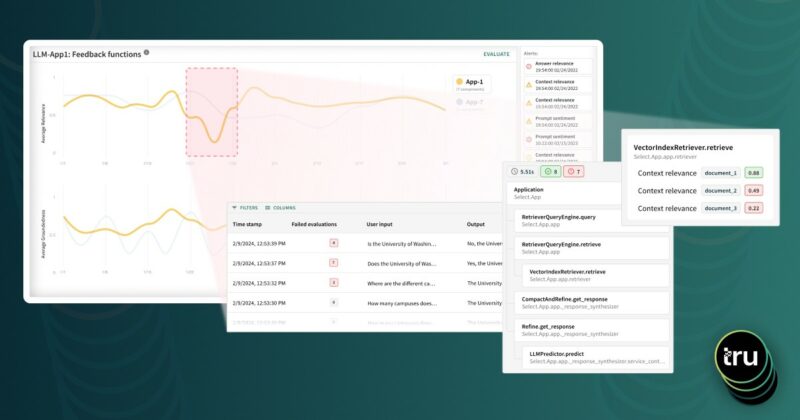

Shared analytics and shared tools can ease communication friction

TruEra’s AI Quality solutions help to tackle this by enabling smoother communication between teams and individuals within a single platform. Users can comment on particular trends and highlight model behavior to others, such as a data scientist telling a data engineer that a particular categorical feature suddenly has a lot of previously unseen values. Users can also generate reports for different workflows to stitch together a complete story; a model risk management team might conduct a fairness analysis on a new model, and then send that report directly to a business leadership team for review. This way, data scientists, engineers, PMs, and others can keep using their own custom tools for their own jobs (model development, engineering, etc.) while the evaluation is centralized.
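To make the categorical-feature example concrete, here is a minimal sketch of the kind of check a data scientist might run before flagging the issue to a data engineer. It is plain Python and is not TruEra's API; the function name `unseen_category_report` and the sample color values are hypothetical and exist only for illustration.

```python
from collections import Counter


def unseen_category_report(reference_values, production_values):
    """Summarize production values that never appeared in the reference data."""
    known = set(reference_values)
    # Count each production value that is absent from the reference set.
    unseen_counts = Counter(v for v in production_values if v not in known)
    total = len(production_values)
    # Fraction of production rows carrying a previously unseen value.
    unseen_rate = sum(unseen_counts.values()) / total if total else 0.0
    return {"unseen_rate": unseen_rate, "unseen_counts": dict(unseen_counts)}


# Hypothetical data: values of a "color" feature at training time vs. in production.
training_colors = ["red", "blue", "green", "blue", "red"]
production_colors = ["red", "teal", "teal", "blue", "mauve"]

report = unseen_category_report(training_colors, production_colors)
print(report)
```

A summary like this is easy to share across roles: the data engineer sees which raw values are new, while the data scientist sees how much of the production traffic is affected.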

Jonathan Jin, a senior engineer at Spotify who works on Hendrix, Spotify’s in-house ML platform, highlighted this during his talk. He spoke about meeting personas “where they are at,” whether they are ML engineers or data scientists, and noted that it took a while for Hendrix to evolve into a unified platform that could still be used by engineers and analysts across various teams. In Spotify’s case, generic all-encompassing ML platforms from major cloud providers weren’t preferable, since Spotify focuses on ease of use for its users, which in many cases requires custom solutions.

The future is a shared endeavor – more collaboration, more communication

Looking at the trend, it is clear that model development is no longer a process that can be done in isolation by a single person or team. Many personas have a stake in an ML model, so there is a need for a central place to evaluate and collaborate on models. As more and more companies adopt machine learning, they would be prudent to plan carefully for how to achieve this in their organizations.

TruEra’s latest version of TruEra Diagnostics includes automated ML testing and debugging. You can read more about it in this blog post: “ML Testing and Debugging – the Missing Piece in AI Development.”

Authors: Divya Gopinath and Arri Ciptadi